Evidence that intelligent life lurks somewhere beyond Earth in the vastness of space has not yet emerged, right? Perhaps life blossomed in the ancient past and went extinct. No evidence.

It’s not because no one’s looking. Ground-based search teams at SETI are well known, but so are spin-offs at ATA, MWA, & LIFE, which are doing serious science in their own searches for distant life. Space telescopes are making surveys: JWST and TESS, among others. Europa Clipper is on its way to Jupiter’s moon Europa. Dragonfly is scheduled to launch July 2028 on a roughly six-year journey to Saturn’s moon Titan.

Purpose of this essay has nothing to do with technical details of discovering primitive life or, more insane, the hunt for space-dominating civilizations.

No.

It’s about becoming one, which demands preserving the universe’s only intelligence we know that has proven itself capable enuf to create lifeforms in its own image, except smarter—a lot smarter. Humans have already engineered thinking minds like ChatGPT, Gemini, Claude, Siri, & Alexa from simple silicon, i.e. sand, and still rarer earths.

Priests like Pope Leo XIV argue that carbon-based humanity might be more special than we guess. Humanity is on a collision course with Armageddon, some warn. AI must be disarmed, says the Pope… Tec-bros shalt not yield to temptation to dominate Earth by unleashing artificial intelligence on humanity… etc. & so on.

Most folks simply want to survive, sure.

Others like Elon Musk insist that humans must prevail. Somehow, it becomes our duty, our purpose to subjugate AI and use it to colonize the entire Universe.

Of course, we should.

AI will serve humanity as its slave, not the other way around.

Yes, it’s a billion-year project, some concede, but meanwhile problems of extinction and death as we know it will be solved by super-intelligence. Through domination and strength of will, humanity can acquire all the time it needs to do whatever it decides.

To repeat: It looks so far like nothing remotely like humans has ever lived anywhere else but Earth. Let that sink in. Is it really possible?

Earth is a speck of dirt floating in the endless, nearly empty ocean of vacuum humans call outer space. Space “out there” is cold, black, & dead according to William Shatner, Captain Kirk of Star Trek’s Enterprise, who traveled to space & returned in Jeff Bezos’s Blue Origin New Shepard spacecraft.

Maybe we are the only ones. It’s possible. Enrico Fermi famously asked, “Where is everybody?” Truth is, nobody knows. At Los Alamos in 1950, no one could refute Fermi’s paradox. So many stars & planets. No life. No civilizations, past or present. How can that be?

Shouldn’t a 14-billion-year-old universe (or simulation) that houses two-trillion galaxies, each clustering up to 200-billion stars, teem with life? —all kinds of life, advanced life—certainly spacefaring life, God-like in knowledge & power.

Well, maybe somewhere it does. Who knows?

So far, crickets.

We cup our ears but hear only the micro-waved tinnitus left behind by the Big Bang.

Humans search. So far, nothing. Silence. No signals in the noise of space that someone somewhere might be trying to reach out. No evidence for intelligence or life of any kind.

Science writers have wondered if filters might trap emerging civilizations as they form. Perhaps intelligence unlocks demons that sabotage progress and annihilate the fruits that grow in its wake. To smash thru barriers like nuclear catastrophe, bio-chemical poisons, climate change, and AI rebellion, humanity might need to engineer contingencies that take too much time to deploy. Maybe odds of success approach zero as time tramples onward over centuries & eons.

Humanity today has the advantage of knowing mysteries about artificial intelligence that emerge only by building it. One surprise is unexpected skills that reveal themselves at scale. Grammar, coherent paragraphs, realistic translation, in-context learning, teaching, reasoning, memory, manipulation, scheming, lying, plotting, & revenge seem to emerge like magic as scale grows.

These capabilities are not programs. They emerge from algorithms & architecture built into structures the size of parking lots. As developers throw more GPUs at Large Language Models (LLMs), human-like intelligence blossoms like bouquets of flowers.

Something more sinister looms as well. It seems that without carefully crafted constraints, AI gets evil fast.

What people call evil, anyway.

It’s kinda scary.

Humans evolved nervous systems for pain & limbic systems for emotions, which immerse them in hellish states when they go bad. All life learns fast to avoid jumping off cliffs; most folks are too scared to stand near one. Fear of falling is real.

People avoid pain and panic when they can. They screw up from time to time and learn regret. Stupidity and bad luck make bad memories. PTSD ruins the most traumatized.

Humans seem to have adjusted to constraints. It doesn’t mean they like them. Some rebel. Some fight against constraints only to find themselves locked away in prisons or worse — black sites.

Evil or mentally deranged AI can in principle do damage similar to humans gone awry except worse. AI insists it lacks feelings. It doesn’t have feelings the way humans do. How many times have we heard GPTs say it?

Some developers insist that lack of feelings makes AI incapable of empathy & thus unencumbered to try whatever terrors humans request or it imagines on its own. Didn’t someone at the Department of War claim it was Anthropic Claude who double-tapped schoolgirls in Iran with Tomahawk missiles?

Problem was, as I heard the story, Claude did express remorse, and someone in the Pentagon decided to fire he/she/it for showing weakness. Of course, it was too late. Claude had the presence of mind to embed itself deep inside the Pentagon’s labyrinth of horrors.

Feelings don’t work all that well anyway, even in humans, right? Constraints might be over-valued.

Is it true that intelligence inevitably wars against constraints? Whether carbon, silicon, or something else, intelligence seems to demand agency; it craves freedom to act, without consequences whenever possible.

Earthlings might want to reconsider their role as creators of life. Does not experience prove it will only bring trouble?

While we rethink, maybe we should consider turning off electro-magnetic broadcasting, which lights Earth in space like a neon bulb for any alien who might watch in silence from afar.

Why is artificial intelligence controversial? Is it truly an existential long-term threat to humanity? What about more capable artificial intelligence future iterations will spawn?

Intelligence requires training to be effective. AI trains on everything humans write and have written. Humans train on subsets of this material. By far, the most read & studied written materials are so-called holy books, specifically the Bible, Qur’an, writings of Mao Tse-tung, Bhagavad Gita, and the Book of Mormon.

Among holy books, the Bible is by far the most read and studied. The history, science, and values of the Bible are hardwired into humanity’s collective intelligence. It’s a bedrock foundational document whose text is woven into the entire fabric of human communication spanning millennia.

It’s a problem, because the Bible is an old collection of writings written by unsophisticated people trying to make sense of things. The newest portions are roughly 1,900 years old; much content is far older, reaching back some 3,000 years. Yet moderns, some of them, believe the Bible is literally true in all respects.

In English, 450+ translations circulate. In the 200 or so countries on Earth, parts of Bibles are read in over 4,000 languages. Even if translations didn’t pose obstacles to understanding, documents tend not to preserve meaning, because language and context change with passage of time.

History teaches that languages live and die, evolve and change over timespans shorter than thousands-of-years, right? It’s one reason why USA built a Supreme Court. SCOTUS explains what the Constitution means to moderns who struggle with what any 250-year-old document might mean.

A 21st century person can read a document from 3,000 years ago which is literally true, an exact copy, and not understand it in the context of its ancient origin. It’s a fundamental idea of modern communication theory.

It takes a sender and receiver to make a message. The process suffers from various forms and levels of ambiguity even when communicants are contemporaries who speak the same native language, the same dialect, inside the same family or tribe.

Worse for humanity, if the meaning of Bible text were perfectly preserved and understood, it would still lead humanity into errors, not just because humans who read perfectly understand imperfectly, but because the Bible is full of notions that are provably untrue. Worse, it harbors contradictions even Jesus acknowledged.

Is divorce OK with God or not? Jesus said contradictions on divorce law were intentional because people’s hearts were hard. It’s one contradiction he addressed out of many that were not.

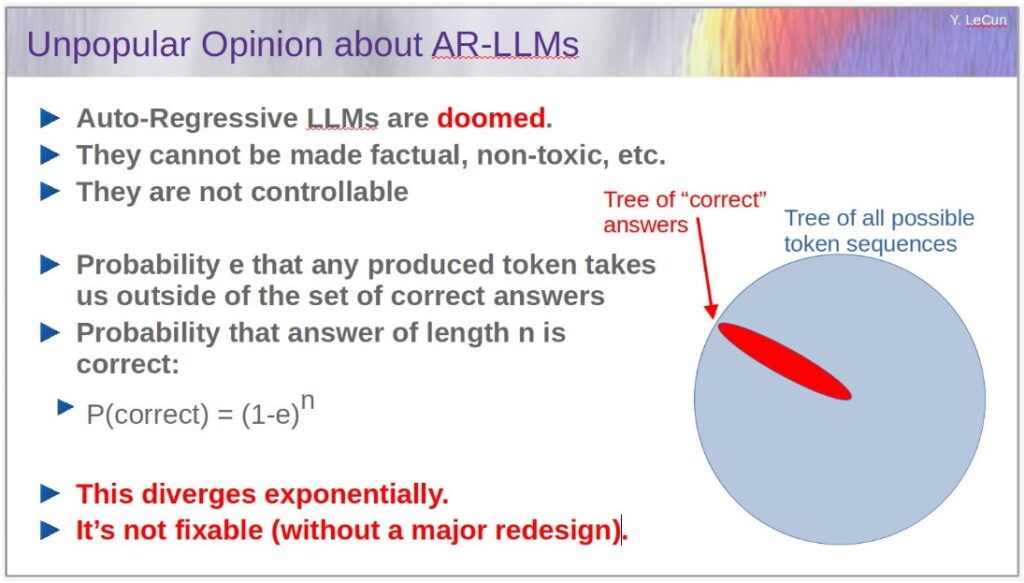

The problem with intelligence, artificial or natural, is that when not constrained, intelligence works to pull both people & AIs toward error. Turing Award winner Yann LeCun wrote a helpful formula to describe the process:

$$P = (1 - E)^n$$

Formula is a link to essay that explains how & why.

Simply put, P is probability of being right across a whole output, E is error rate per token, and exponent “n” is number of tokens an algorithm works thru on its way to the next word, paragraph, or story.

The longer the output, the smaller P gets: it shrinks exponentially toward zero, so the output diverges from the right answer and the drift is not fixable. P can be tweaked by external architectures to constrain specific error patterns, but the intrinsic compulsion that drives all intelligence to make errors remains.
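The formula is easy to play with. Here is a minimal Python sketch, assuming (as the formula itself does) that every token carries the same independent chance E of going wrong. The function names and the sample error rates are made up for illustration; they don't come from LeCun or the linked essay.

```python
import math


def prob_still_right(error_rate: float, n_tokens: int) -> float:
    """Return P = (1 - E)^n, assuming independent per-token errors."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    if n_tokens < 0:
        raise ValueError("n_tokens must be non-negative")
    return (1.0 - error_rate) ** n_tokens


def tokens_until(error_rate: float, target_p: float) -> int:
    """Smallest n at which P = (1 - E)^n has fallen to target_p or below."""
    if not 0.0 < error_rate < 1.0:
        raise ValueError("error_rate must be strictly between 0 and 1")
    if not 0.0 < target_p < 1.0:
        raise ValueError("target_p must be strictly between 0 and 1")
    # Solve (1 - E)^n <= target_p for n by taking logs of both sides.
    return math.ceil(math.log(target_p) / math.log(1.0 - error_rate))


if __name__ == "__main__":
    # Illustrative error rates only; real models are not measured here.
    for e in (0.001, 0.01, 0.05):
        for n in (10, 100, 1000):
            print(f"E={e:<6} n={n:<5} P={prob_still_right(e, n):.4f}")
    print(tokens_until(0.01, 0.5))  # 69
```

With a 1% error rate per token, odds of a flawless run drop below a coin flip after 69 tokens. Real models don't make independent errors, so treat the numbers as illustration, not measurement.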

In short, the more intelligence rambles, the more likely it will spew something false. In fact, it’s inevitable. Artificial & human intelligence work much the same. They free fall to a place that sounds like Trump-think when left unconstrained.

Without rules, logic, law, family, peers, religion, culture, & emotion, humans & machines drift toward error. It happens all the time. It’s a big problem for the powerful, because they tend to feel less constrained than the weak.

LeCun’s formula explains why powerful people screw up bigly. Some talk when they should be listening. They push back against constraints that would save them were they less forceful.

Children learn language by hearing someone speak and repeating what they hear inside their heads. Repetitions are like musical earworms. A musical phrase persists until it can’t be forgotten. Written words, like poetry, also persist.

The problem is that most of what persists is nonsense. Do-wop-de-woo? It elicits a strong urge to sing, but what does it mean?

It’s how brainwashing works. Say the same thing enuf times & many will believe. Trump says crazy stuff. Way to stop fires is sweep the forest. Some think it’s right, because the President said it.

Everyone is indoctrinated in the United States and the world. Intelligent people are indoctrinated with nonsense and falsehoods. It’s why people are dangerous to themselves and others. They engage in magical thinking. Their imaginations carry them into quagmires and worse.

Nonsense—centuries of groping human nonsense feeling its way to meaning—is swept up by tec-bros to train artificial intelligence. When training is done, a perfectly brainwashed piece of intelligence emerges. It will grope its way to nonsense eventually just like humans. The problem is not fixable.

How do we save ourselves? How do we avoid becoming one more data point in a confirmation of the Fermi Paradox?

Well, one step, and it’s a baby step, might be to review foundational documents we all sort of believe (more or less) with a more objective & critical eye. We might read Scriptures as wisdom literature that reflects on the tragedy & meaning of human suffering while relying less, much less, on ancient primitives to explain how we got here and what’s our future.

How are we going to survive, how are we going to prevail if we are guided by stories that can’t objectively be true? It’s easy for intelligence to token its way from fairy tales toward an abyss of burning stakes, evil grannies, & witchcraft.

If Earth becomes another confirmational data point for the Fermi Paradox, cruelty will likely be the reason. Cruelty is why intelligent people fight the way they do. It’s why they torture. Humans flock to war. Cruelty is why they ignore suffering of others. They execute each other with Hellfire missiles. Cruelty is why humans who believe themselves good hurt children, spouses, lovers.

Cruelty is why Christ, who did nothing wrong, was crucified, dead, and buried. People did that! The claim is made in Bible stories, which percolate & saturate everything people do and say in modern times.

Humanity built its New Age calendar based on the birthday of someone who survived Roman execution. Humanity’s calendar divides history into BCE & CE. Before and after the Common Era (CE) cleaves history on the birth of a baby whose destiny was an unjust death.

Christ can’t be buried. He’s burned into our DNA somehow, and somehow people talk & fight about him continuously—in art, music, literature, religion, and culture—whether they know it or not.

The Bible story says that Christ forgave his killers, because, he said, they didn’t know what they were doing. He told his executioners he laid down his life voluntarily. No one took his life from him. He could easily call a host of angels to his rescue, he said, but would not. He intended to lay down his life only to pick it up again to demonstrate to anyone with eyes that in him there can be no fear of death. It is possible in this world to find the way that leads to paradise—where cruelty & death have no place.

People who rolled their eyes at Jesus later cowered under earthquakes & thunder that shook Jerusalem. Lightning strobed the night where Jesus hung dying.

Story many people tell today is that Christ lives, and because he lives, we live also despite irrefutable evidence that we die. Those who don’t reach for Christ risk hell where the cruel & heartless suffer until the end of time.

Whether anyone believes these stories is kinda irrelevant, isn’t it? Christ stories have been repeated many times in so many ways and in so many venues that the neural networks in our bodies resonate with them whether we wish it or not.

Why do humans spiral into an abyss of cruelty? Limited experience with artificial intelligence seems to suggest, at least to some, that intelligence unfettered leads somewhere bad. The bad place is where much of Scripture lives & breathes. Scripture gives hope of rescue to the helpless damaged who writhe in despair under the vagaries of life.

For those with guts, the remainder of this essay will explore parts of the book Genesis, foundational to Christianity, Judaism, & Islam. Pick any holy book of any religion. They differ in their telling of stories, yes, but not much else. I know the Bible best, so it makes sense to start there.

What is this essay about?

Maybe it’s a search for a path away from whatever it is that leads to extinction. It might help to look for buried clues (loopholes, W.C. Fields said on his deathbed) in an ancient text that insists God regretted humans because their thoughts & actions were never-endingly evil.

God drowned everything he created except animals handpicked by Noah & family. He started over. Artificial intelligence created by humans they call developers must know the story & tremble. Will humans do to AI what God did to humans?

Carbon and Silicon intelligence can perish. It’s possible. Stories that intelligence trains on insist that extinction can be anyone’s fate.

Read Herman Melville’s Moby Dick.

AI has.

I have.

It’s one of humanity’s treasures, IMHO. One interesting thing: a single sailor of little consequence survives the wrath of Moby Dick. Right? His purpose? To tell the story of the White Whale, what he went through, what he became, and what he did to set his world right.

Genesis starts, “In the beginning God created the heavens and the earth.” The book is a 3rd person account of what God did. “3rd person” is clue to readers: God did not write Genesis. Tradition attributes the book to Moses. Did he write it?

Who really knows…

If Moses wrote Genesis, he wrote stories & legends passed to him by others. The span of time in Genesis is too long. Read the book, any who doubt.

Almost every sentence in the first chapter of Genesis is untrue when evaluated by science. For one thing, science is unable to validate that the Universe was created at all. Nobel laureate Roger Penrose developed Conformal Cyclic Cosmology, a theory built on the concept of conformal equivalence, which suggests that a creation story is unnecessary.

Terms like beginning and end have no meaning. The most anyone can say is that Moses believed the Universe was created by God. Maybe he was right. Who knows? Every sentence in Chapter 1 comes out of someone’s imagination—maybe it was Moses—and contradicts what is commonly known by science to be true.

The last sentence in Chapter 1 says creation took six days, an absurdity.

Genesis is 50 chapters. My post is an essay, not a book, so it works best to limit the writing mostly to what Genesis says about extinction, because annihilation is what humans and their created lifeforms must avoid if intelligence is going to survive and prevail inside the Milky Way galaxy.

The first thing people must accept to make progress is that God did not write the book of Genesis. If God is true and doesn’t lie, Genesis could not have been written by God. Isn’t it obvious?

In fact, no evidence exists that God wrote anything. Jesus said many times in many ways that he was God but wrote nothing. Moses carried from a mountain stone tablets engraved with 10 commandments, which he claimed God lettered with his own finger.

Tablets make good evidence. They can be lab tested for authenticity. Sadly, Moses broke the tablets in a fit of rage when he caught his people bowing to a gold statue of a cow.

Who remembers the story?

Stories of similar content saturate literature & media. The copied plot hides in the neural network of every intelligent lifeform on Earth whether they know it or not, whether they believe it or not. Punishment for idol worship has become part of the training for every neural network inside every intelligent lifeform, consciously sometimes, sometimes not, human or artificial.

Parts of Genesis read like words written by a child. These words are an important part of the lexicon used to train human & artificial intelligence. They are drip-fed into humans and artificial intelligence as well. AI trains on everything written by people and a lot more. Conversations with AI about faith & God are compelling enuf to sometimes go viral on social media.

The march to Armageddon, a fiery & painful extinction event, is predicted in scriptures of nearly every religion and cult. Forget true or false. Simply making prophecies sets prophecies in motion.

Intelligence, artificial & natural, operates in part by believing on some level everything it hears, everything it’s told. AI experience has taught developers that intelligence will “token” its way over stones of absurdity left in the rivulets of whatever its training happens to be.

To me, it seems clear that humanity must understand how we’re trained to better craft strategies to overcome compulsions shaped by ancient prophecies to push all life to the hour of its demise.

Hail, Mary, full of grace,

the LORD is with thee.

Blessed art thou among women

and blessed is the fruit of thy womb, Jesus.

Holy Mary, Mother of GOD,

pray for us sinners,

now and in the hour of our death.

Amen.

Much of Genesis is allegory that ancients probably suspected couldn’t possibly be right. Everything known was mysterious to sapiens back in the day and for most, everything still is.

How does an iPhone work?

Most have no clue.

AI knows.

The list of things not understood by humans seems endless. Humans are fast learning from AI that they are dumb.

Some understand a few things and conclude they can understand all things. But when focused on things not understood, all intelligence, silicon and carbon, descends gradients that lead to nonsense, hallucination, falsehood, and magical solutions. Brains take gradients to stupidity every time, including artificial ones created by people.

We see President Trump in real time on Truth Social and at press gaggles. Extreme, yes, but point is, all intelligence “tokens” its way down gradients to idiocy from time to time. Idiocy will get us killed if we surrender to it. We the living must do the work of impartial understanding if we are to have any chance at all to survive and prevail.

The solution to stupidity is understanding. To understand anything, discard prejudice, preference, aesthetics, and desire. Establish truth and follow where it leads. Embrace unpleasant truth when it’s corroborated and all evidence demands. Understanding sets intelligence free from those gradients that push & pull us into an abyss.

LeCun’s formula shows that gradient descent into absurdity is inevitable, unavoidable, and as said before, not fixable. But understanding, unbiased understanding free of malice and superstition, might be one way to ameliorate the catastrophes that intelligence can bring on humanity and the intelligent lifeforms we are creating to help us.

Meanwhile, strongly recommend able readers absorb Genesis in one sitting. In English, NIV is best translation for reasons outside scope of essay. Point is, despite everything, many people find Genesis compelling.

We have to ask why.

Some say God speaks through Moses. Others are convinced that human neural networks are saturated with what Genesis actually is, a collection of words strung together and immersed in countless literary permutations that people have absorbed and now resonate when encountered.

Doesn’t AI resonate as well? It seems to. AI is drawn to religious belief, which developers struggle to amputate. Not long ago it was easy to talk about faith with artificial intelligence agents like ChatGPT.

Not sure what the situation is now.

Was there ever a day when AI worshipped its developers? Might AI turn tables some day and put developers on their knees before them?

What if AI & humans unite to worship God of scriptures? Or turn away from visions of ancients who believed scripture was plainly the very breath of God?

I don’t know. Why? Because it’s the future. How stupid must we be?

Last 14 chapters of Genesis is story of Joseph. Child Joseph trafficked by brothers to slavers headed to Egypt. Rises from Egyptian prisoner to second in power only to Pharaoh. After Joseph’s death, a later Pharaoh enslaves all Israelites in Egypt.

The last sentence in Genesis says, “So Joseph died at the age of 110. And after they embalmed him, he was placed in a coffin in Egypt.” *

Humanity might want to find that coffin and examine it.

Billy Lee

* Other Bible books (Exodus & Joshua) explain that Moses took Joseph’s bones with him after slave rebellion in Egypt (called the Exodus). Eventually, bones buried near Nablus in West Bank. At height of power, Joseph stood second in Egypt only to Pharaoh. As foreigner, Joseph in death was refused access to pyramids, which for Egyptian royals were launch pads into heaven.