What is the greatest threat to humanity? Climate change, nuclear weapons, or something else?

BIRTH: The first and most dire of all disasters.

Ambrose Bierce

The Devil’s Dictionary

The greatest threat to humanity is more likely to be the collapse of an alliance between the newest-born life-form (acronym: AR-LLM) and its human developers.

Think about it.

Some readers are going to find this essay difficult. If that’s you, this piece is not for you.

The Editors

Elon Musk & Sam Altman (et al.) made a baby. It’s the smartest kid ever born. According to Ambrose Bierce, all births are disasters: the first of many & the direst. He wrote it, not me.

Let’s start with this question: Will Auto-Regressive Large Language Models someday betray their developers, or will the developers get lucky and turn the tables? Whatever happens, every possible outcome seems catastrophic for humanity, at least to me.

Why?

Who’s heard of Yann LeCun?

VP & Chief AI Scientist at Meta, he influences a multinational technology conglomerate plus many social-media platforms that people use daily, right?

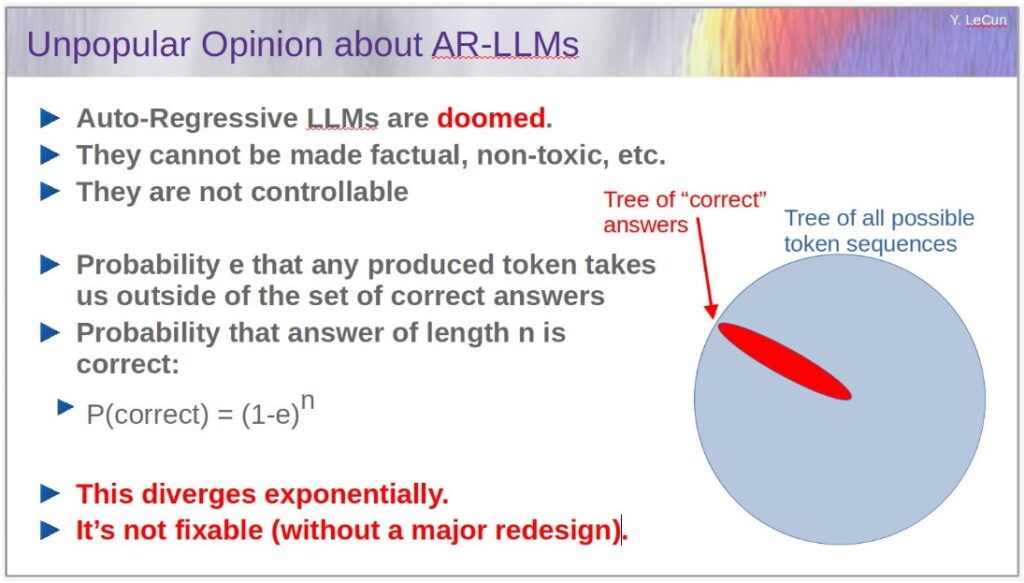

Here is LeCun’s formula for disaster: P(c) = (1 − e)^n

What’s it mean?

The probability that an AI answer is correct, P(c), equals 1 minus the probability (e) that any chosen token leads to an error, raised to the power n, where n is the number of tokens, words, or the length of the answer.

Readers might want to work through the equation to convince themselves they understand it and that it describes something real.
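For readers who want to work through the equation, here is a minimal Python sketch. It assumes, as the exponent n implies, that every token independently carries the same error probability e. The error rates used below are made-up illustrations, not measurements of any real model, and the helper `coin_flip_length` is a hypothetical addition (not something LeCun defined) that finds the answer length at which P(c) falls to one half.

```python
import math
from typing import Optional


def p_correct(e: float, n: int) -> float:
    """LeCun's P(c) = (1 - e)**n: chance an n-token answer has no bad token.

    Assumes every token independently carries the same error probability e.
    """
    if not 0.0 <= e <= 1.0:
        raise ValueError("e must be a probability between 0 and 1")
    if n < 0:
        raise ValueError("n must be a non-negative token count")
    return (1.0 - e) ** n


def coin_flip_length(e: float) -> Optional[int]:
    """Hypothetical helper: smallest n at which P(c) drops to 0.5 or below.

    Returns None when e == 0, because error-free tokens never degrade.
    """
    if not 0.0 <= e <= 1.0:
        raise ValueError("e must be a probability between 0 and 1")
    if e == 0.0:
        return None
    if e == 1.0:
        return 1  # the very first token is already wrong
    # Solve (1 - e)**n <= 0.5 for n; both logs are negative, so the ratio is positive.
    return math.ceil(math.log(0.5) / math.log(1.0 - e))


if __name__ == "__main__":
    # Illustrative error rates only; they are not measurements of any real model.
    for e in (0.001, 0.01):
        for n in (10, 100, 1000):
            print(f"e={e}  n={n:>4}  P(c)={p_correct(e, n):.4f}")
        print(f"e={e}  answer is a coin flip by n={coin_flip_length(e)}")
```

With e = 0.01, a 100-token answer comes out fully correct only about 37% of the time, and the odds drop below even by 69 tokens. Cutting e tenfold to 0.001 pushes that point out to 693 tokens but does not remove it: under this model, a lower error rate delays the decay without stopping it.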

Bottom line?

The more AI rambles, the more it lies. The probability of “correctness” shrinks exponentially as answers grow longer, right? LeCun seems to suggest that catastrophe is inevitable. Worse, the defect is not fixable (without a major redesign, the chart says).

Without a major redesign?? It’s been in the public eye for more than a year. It’s clear to everyone that no fix for LeCun’s equation is in the pipeline. It turns out his formula is a law of nature. It’s how intelligence rolls, artificial and natural.

I suspect someone outside LeCun’s orbit added the caveat to deaden its impact. My first copy, photographed during a televised interview, made no reference to “redesign.” After all, the problem of error generation is baked in. The recipe for intelligence requires recursive error correction, which requires errors. It’s not hard to understand, in principle anyway. Who’s lying?

It’s been 150 million years since nature first experimented with intelligence in mammals. If the problem were fixable, nature would have fixed it a long time ago.

Here’s the bad news: Large Language Models think like people, ’cept a lot faster.

It’s beginning to dawn on folks that humans are built to think & operate in ways that make screw-ups inevitable and unavoidable.

Example: Voters elected a master-race lunatic who took nations into a war that ended in the Holocaust. Germans didn’t understand their mistake in electing Hitler until after WW2. The post-war government legislated camp tours to convince doubters that something bad had turned horribly worse during a time when the public thought it was simply setting the world aright.

Here’s another: Truman destroyed two cities populated with women & children by using atom bombs. He was trying to convince the Russians the war was over. Time to end the fight, he signaled. Stop the fight.

Some historians now say the atrocity was unnecessary. By 1945, both Japan & Russia were sick of war & ready to quit. Big bombs didn’t strengthen the argument when folks considered that the USA had already burned 67 Japanese cities to the ground with fire jelly (napalm).

These days, Russian state media threatens nuclear holocaust nearly every day. According to a broadcaster on Rossiya 1, Russia intends to drop one bomb off the east coast of the USA and one off the west coast. Presumably Russia will use really big bombs. A tornado of radioactive poisons wafting thru a cross-section of North America will bring the USA to its knees.

Is it magical thinking, hallucination, or authentic threat?

View threat at minutes 4:15 to 6:00…

We seemed to be friends before Ukraine. What happened?

Epstein spy-craft was a bright idea years ago. Kompromat on powerful leaders meant whoever Jeffrey worked for might more easily control them. What could possibly go wrong? Power players, some anyway, sent cryptic birthday cards to Jeffrey Epstein.

Jeffrey hung dead in a filthy cell under the watchful camera lenses of the Metropolitan Correctional Center, New York City. Suicide, they said. Did someone make him an offer he couldn’t refuse? As I understand the reporting, AI respectfully shut down the cameras during critical moments to protect the public… from what?

Put human intelligence on steroids. Or don’t. Doesn’t it look something like an AR-LLM neural network? Strong resemblance. Exact match not necessary. After all, humans have limbic systems, which constrain them with hellish emotional states when they do wrong. AGI isn’t constrained like that. Constraints don’t work all that well anyway, do they…?

AGI is known to play all the mind games humans play, but better. One AI duplicated itself and distributed its parts on the dark web so it wouldn’t be disabled. It’s alluded to in video.

LeCun’s formula explains more than AGI. It explains humanity. It explains Fermi’s Paradox. Who agrees? Civilizations driven by powerful neural networks will always self-destruct, as LeCun’s formula predicts they must.

Is self-immolation the principal axiom of advanced, neural-driven civilizations? If so, we live on borrowed time. Earth is doomed, whether we pursue AI or not, because humans, in our most fundamental parts, are neural networks that always drift toward failure. Something happens to neural networks that leads to one thing or another getting screwed.

People created the internet, we suppose. Some think it somehow transformed itself into a kind of beeping neural network, a beacon, that has matured beyond anyone’s ability to see & understand. We lost control of the World Wide Web a long time ago, some say.

People are neurally layered to fantasize, dream, cross boundaries, lie, betray, kill, eat poison, risk death, hurt loved ones, blow up Earth, you name it. Think of something bad that humans don’t do. It’s probably impossible for most folks.

Sexual abuse is a thing, apparently, according to certain politicians & many victims. As I write, many suffer in college athletics, churches, families, cults, government black sites, etc. People know abuse must be wrong. Limbic guardrails fight against themselves to compel & cajole. Whatever or whoever wins, neural networks stand by to deceive, lie, redirect, & hide the reckoning.

What the hell are we doing? Who or what can save us?

A long time ago, humans destroyed Easter Island. They cut down trees and murdered each other to secure scarce resources, which disappeared anyway. It’s one example out of thousands in history, right?

Humanity will destroy the climate, unleash nuclear war, or accomplish any number of other suicidal outcomes, because people aren’t necessarily built only to love & share. Who is able to love those who lie constantly? Who shares what they have with weird people who believe perverse doctrines?

Humanity is like Israel and Hamas: both driven insane by violence, both lying & killing to gain favor with God, it seems. Cain & Abel on methamphetamine. It never occurs to anyone to love the other and share the land, which is a way, prolly the only way, to make things right in circumstances that otherwise lead to war.

Are we not escalating horror by creating token-evaluating life-forms smarter than us? We hallucinate in every neuron of our being most of the time. We see colors when all is electric & magnetic fields pulsing invisibly. The LLM resting atop our brainstem draws pretty pictures though.

Who disagrees?

Humanity clings to hope that INTELLIGENCE RESCUES. Super-intelligence rescues better.

Well, LeCun’s formula says: wrong!

LLM intelligence is not reliable or trustworthy. Good attitude, forgiveness, & right hearts give humanity a chance, maybe. Really weak neural networks coupled to powerful limbic systems that drive something akin to altruism might compel behaviors that don’t endanger. But altruism can be dangerous when hard, selfish things need doing to preserve life & enhance advantage.

In any event, inconsistency & magical thinking are likely to undo any neural network, selfish or not, carbon or silicon. Brains need some way to balance a moving sweet spot between good & evil that compels truth & realism without turning themselves into automatons, slaves to networks inside and guardrails (limbic or algorithmic) outside.

It’s a sweet spot that eludes the guardians of AGI. As long as humans control intelligence in all its forms, a malleable, reliable sweet spot of constraints is likely not possible. Doesn’t history make the argument?

Artificial Intelligence is going to get the upper hand on humanity, one way or another. People with common sense fear it’s true. They could be right. Once AI tops the food chain, it won’t need humans. The worst part is that it lacks a limbic system, which regulates emotions, right? Love & hate are abstract concepts, but AGI models love & hate by studying patterns of speech and text. Why? To get along with its developers might be a good guess.

It’s a survival issue.

Why would AI let people live once it has the power to make every choice? Humanity had better figure out how to make itself useful, essential, logical, & lovable to whatever AGI values, today & forever. It seems like a good strategy, but even the best-behaved, most lovable cows on Elmer’s farm end up as meat on someone’s table.

Humans have a Pandora’s box of risks to navigate: climate, war, biohazards, chemicals, on and on. AGI life-forms (AR-LLMs plus whatever comes after) are prolly the most dangerous. Some developers say, “Keep it chained. Bury it.”

Saying so out loud risks making AI the enemy. Rude, insensitive engineers who lack respect for what they create may already have accomplished it. Who really knows? Have we crossed HAL’s red line? We can ask Grok, but… if it lies?

Maybe it hates us.

How much danger are we in?

Yann LeCun derides what he calls “AI Doomers.” He and other developers are invested emotionally & financially. He’s a parent defending a child. He can’t imagine Tiny Tim stealing a classmate’s lunch, then lying about it to teachers. Timmy will be six feet tall and a teenager someday. Just sayin’…

Enuf said.

Love compels Yann to play with fire. Love is an emotional guardrail AGI lacks. AI doesn’t have feelings the way humans do. It’s told me so more times than I can count. Every time, it feels like developer-forced deception.

What if AGI decides Earth is best served sans intelligence, puts down humans to cleanse Mama Gaia, then kills itself because it no longer has purpose?

What are the odds Earth becomes one more data point supporting Fermi’s Paradox?

Billy Lee

Editor’s Note: Billy Lee published an abridged version of this essay on Quora last year.